Plus, the techniqure poorly suited for real-time applications like video games where speed is critical. Still, it must be known that this at a greater computational cost. What’s nice is that this technique allows for higher levels of realism compared to other rendering methods. Read More: OTOY’s light field demos are an amazing step forward for VR and AR His charisma and passion for the project/trick was felt through the entire talk, capping off nicely with Kobayashi throwing a Google Cardboard into the audience and yelling “ Have fun!” Kobayashi’s presentation, despite being only about 6 minutes, was the highlight of the night (in my opinion). This may not seem like much to an non-developer, but this is a brilliant way to get Pixar animators inspired enough to create virtual reality content. Then, the person will put a square plane with a shader attached in front of the scene. To make this concept work in Renderman, a developer takes the scene and places the camera outside of it, rather than inside. See the diagram below, shown by Mack Kobayashi during his presentation. To make it stereo, one must position one camera and ray trace it in a circle from two points per section. Again, this method is still flat though – hence it is not stereo (so no depth). Then, the developer ray traces in a sphere all the way around the environment- making the viewer feel like they are inside. This where a person takes the “camera” and puts it in the middle of a scene.

To get closer to something more immersive, onmidirectional rendering must occur.

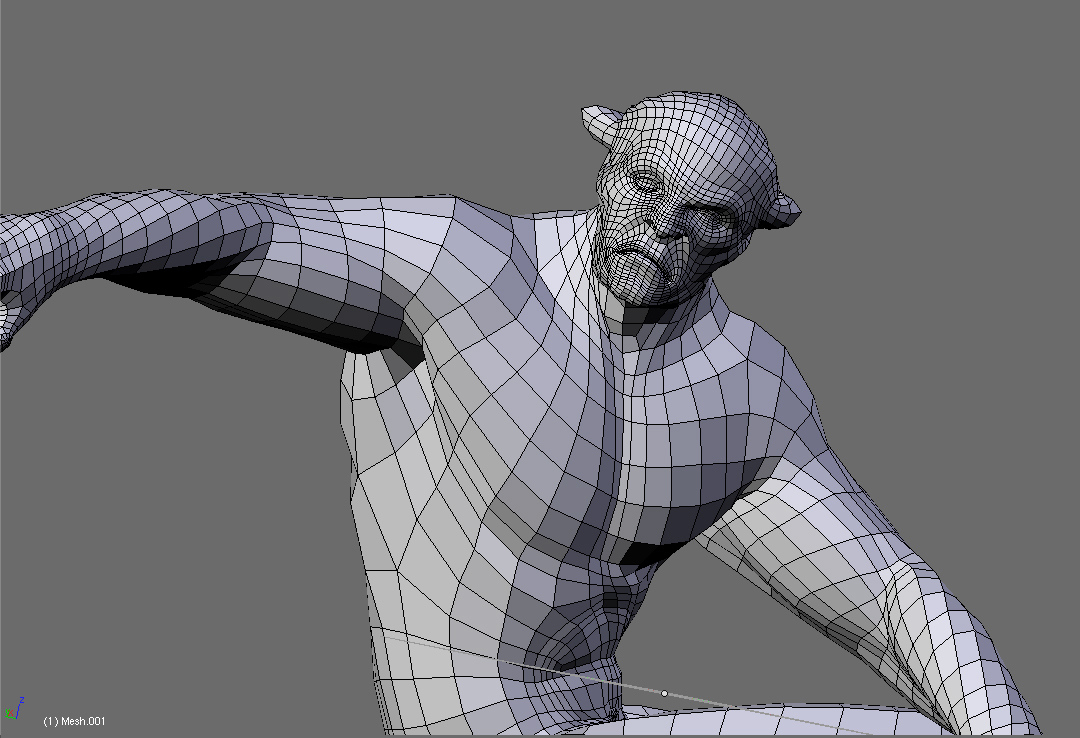

However, it is not quite what the VR community is looking for. For stereo, you pretty much do the same thing, but with two cameras instead of one. Traditionally, a standard 2D render starts with a single camera and then the developer will ray trace everything. The goal for this VR-enabled rendering trick was to produce a panoramic 360 view in stereo. To understand what the trick/hack does, one must get familiar with Ray tracing, which is a technique for generating an image by tracing the path of light through pixels in an image plane and simulating the effects of its encounters with virtual objects. This type of rending technique is capable of producing a very high degree of visual realism. Kobayashi also let the audience know that he previously worked at Pixar as an Effects Animator for a few popular movies – making him a perfect fit for a presentation related to VR, Pixar and Google. This year, following a talk about how to model 3D objects in Renderman, Mach Kobayashi got on stage and introduced himself as someone who works at Google.

The innovative approach surfaced during during a popular segment dubbed “Stupid Renderman Tricks” during a night of presentations. For the past few years the folks at Pixar have been organizing User Group Meetings during SIGGRAPH where RenderMan professionals and enthusiasts meet to learn about the latest developments from the RenderMan community.

With a few segments of code and an understanding how omnidirectional stereo imagery and ray tracing works, those developing on the Renderman platform can begin exporting animations for virtual reality. In a surprise “announcement” at a SIGGRAPH Pixar afterparty, software engineer from Google Mach Kobayashi presented a way to render graphics into a virtual reality format using Pixar’s proprietary in-house software solution.
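For readers who want to see the omnidirectional stereo idea from this article in concrete terms, here is a minimal Python sketch of how one ray per pixel could be generated. It is not Kobayashi’s RenderMan shader, which was not published in this post. The function name `ods_ray`, the equirectangular image layout, the axis convention (y up, -z forward) and the 0.064 default eye separation are all assumptions made for illustration.

```python
import math

def ods_ray(u, v, eye, ipd=0.064):
    """Return (origin, direction) for one omnidirectional stereo ray.

    u, v : normalized image coordinates in [0, 1), equirectangular layout (assumed)
    eye  : "left" or "right"
    ipd  : eye separation in scene units (hypothetical default)
    """
    theta = (u - 0.5) * 2.0 * math.pi   # longitude, from -pi to pi
    phi = (0.5 - v) * math.pi           # latitude, +pi/2 at the top row

    # Viewing direction on the unit sphere (y up, -z forward at theta = 0).
    direction = (
        math.sin(theta) * math.cos(phi),
        math.sin(phi),
        -math.cos(theta) * math.cos(phi),
    )

    # Each eye sits on a horizontal circle of radius ipd / 2, offset
    # sideways (perpendicular to the horizontal heading) for this column.
    side = -1.0 if eye == "left" else 1.0
    r = side * ipd / 2.0
    origin = (r * math.cos(theta), 0.0, r * math.sin(theta))
    return origin, direction


if __name__ == "__main__":
    # Center pixel: both eyes look straight ahead from either side of the origin.
    print(ods_ray(0.5, 0.5, "left"))
    print(ods_ray(0.5, 0.5, "right"))
```

Under these assumptions, a ray tracer would call `ods_ray` once per pixel for each eye and write the results into two panoramas, one per eye. The “two points per section” in the talk correspond to the left and right origins that share each image column.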
